Today, we’re going to learn how to set up Blender to render a full-immersion 3D video and upload it to YouTube. We’ll start by covering some gear to preview your videos (with links to Amazon), then quickly fabricate a scene, configure Blender for 3D output, do the required prep, and finally upload to YouTube. Nothing we do here is particularly novel or difficult, but it will hopefully save you some time in making videos of your own.

Here’s a preview of the finished product. Open it in the YouTube app to view it as a Cardboard video.

Direct link:

https://www.youtube.com/watch?v=4QP9vHB7-Rw

And the .blend file:

https://drive.google.com/file/d/0BxkijDBoaFrmd2Rua0k1NnZIeGc/view?usp=sharing

- Motivation

- Preview gear

- Setting up scene

- Render settings

- Camera settings

- Output settings

- YouTube Prep

- YouTube Upload

If you’re in the market for something cheaper and Prime-ready, this is also an option:

Again, any Cardboard device will do. Here is a lengthy Amazon list of cheap devices.

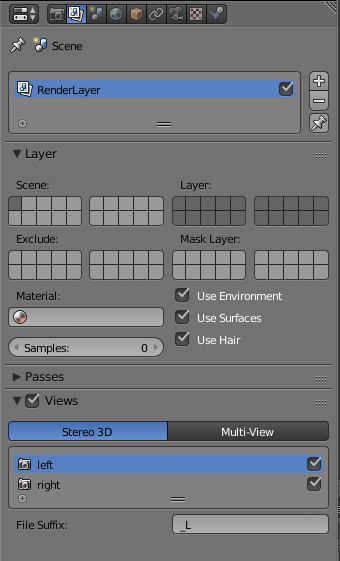

Next, open up the “Render Layers” section on the right side and check “Views” at the bottom.

By default, Stereo 3D provides left and right views. We’re done with this pane.
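If you’d rather script this step than click through the panel, here is a minimal sketch using Blender’s Python API. It assumes the `use_multiview` and `views_format` properties on the scene’s render settings, which is how the “Views” checkbox and the Stereo 3D choice are exposed in the versions I’m aware of; names can shift between Blender releases, so treat it as a starting point. The function name `enable_stereo_views` is my own.

```python
try:
    import bpy  # only available inside Blender's bundled Python
except ImportError:
    bpy = None


def enable_stereo_views(scene):
    """Turn on multiview rendering with the default left/right stereo pair."""
    scene.render.use_multiview = True        # the "Views" checkbox
    scene.render.views_format = 'STEREO_3D'  # provides the left and right views


# Inside Blender, apply it to the scene that is currently open.
if bpy is not None:
    enable_stereo_views(bpy.context.scene)
```

Paste it into Blender’s Python console or text editor and run it; outside Blender it only defines the function.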

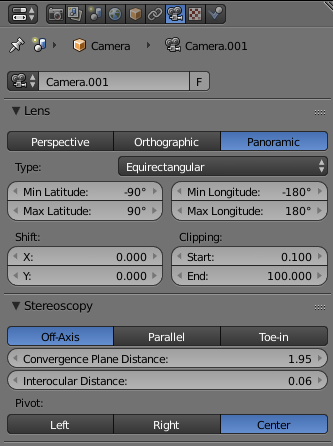

Convergence Plane Distance and Interocular Distance can stay at their existing values.

Set the pivot to whatever you’d like. I prefer ‘center’.
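The pivot choice can also be set from Python. This is a small sketch that assumes the camera’s stereo settings live under `camera.data.stereo`, with `pivot`, `convergence_distance`, and `interocular_distance` properties; the helper name `set_stereo_pivot` is hypothetical. It leaves the two distances alone and just reports them.

```python
try:
    import bpy  # only available inside Blender's bundled Python
except ImportError:
    bpy = None


def set_stereo_pivot(camera_data, pivot='CENTER'):
    """Set the stereo pivot and return the untouched distance settings."""
    stereo = camera_data.stereo
    stereo.pivot = pivot  # expected values: 'LEFT', 'RIGHT' or 'CENTER'
    # Convergence plane and interocular distance are left as they are.
    return stereo.convergence_distance, stereo.interocular_distance


# Inside Blender, apply it to the active scene camera if there is one.
if bpy is not None and bpy.context.scene.camera is not None:
    print(set_stereo_pivot(bpy.context.scene.camera.data))
```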

Your camera tab should look like this:

Device: GPU Compute! Don’t forget this! You _can_ use your CPU to run Cycles, but it’s going to take a lot longer.
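Since this one is easy to forget, it may be worth scripting. The sketch below assumes the Cycles add-on exposes its device choice as `scene.cycles.device`; the function name is mine. Note that Blender also needs a compute backend enabled in the user preferences before the GPU option has any effect, and this snippet does not touch that.

```python
try:
    import bpy  # only available inside Blender's bundled Python
except ImportError:
    bpy = None


def use_gpu_for_cycles(scene):
    """Select Cycles as the render engine and ask it to render on the GPU."""
    scene.render.engine = 'CYCLES'
    scene.cycles.device = 'GPU'  # 'CPU' also works, just much more slowly


if bpy is not None:
    use_gpu_for_cycles(bpy.context.scene)
```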

Output: MPEG (Needed for Google’s Metadata tool.)

Views Format: Stereo 3D

Stereo Mode: Top-Bottom (or left-right, but I like top-bottom because it’s easier to view the video before upload.)

Encoding:

Format: MP4 (Needed by YouTube’s tool.)

Codec: H.264

Audio Codec: AAC
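The output and encoding choices above can be sketched in one function. I’m assuming the property names from Blender’s Python API as I know them (`image_settings`, `stereo_3d_format`, and the `ffmpeg` settings block) and that the “MPEG” output option maps to the `'FFMPEG'` identifier; depending on your Blender version some identifiers may differ, so check the tooltips in the UI if an assignment is rejected.

```python
try:
    import bpy  # only available inside Blender's bundled Python
except ImportError:
    bpy = None


def configure_output(scene):
    """Write a top-bottom stereo MP4 with H.264 video and AAC audio."""
    image = scene.render.image_settings
    image.file_format = 'FFMPEG'                      # Output: MPEG
    image.views_format = 'STEREO_3D'                  # Views Format
    image.stereo_3d_format.stereo_mode = 'TOPBOTTOM'  # Stereo Mode

    ffmpeg = scene.render.ffmpeg
    ffmpeg.format = 'MPEG4'     # Format: MP4
    ffmpeg.codec = 'H264'       # Codec
    ffmpeg.audio_codec = 'AAC'  # Audio Codec


if bpy is not None:
    configure_output(bpy.context.scene)
```

If you prefer left-right, the stereo mode would be `'SIDEBYSIDE'` instead, but then remember to pick the matching option everywhere else in this walkthrough.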

Then set your start frame, end frame, and resolution. Mash Render. Your settings should look like this:

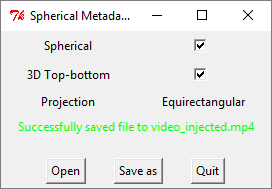
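For completeness, here is a rough script version of the frame range and resolution step. The numbers in the usage section (frames 1 to 250 at 2048×2048) are placeholders I picked for illustration, not the values used for the video above, and `set_render_range` is a hypothetical helper name.

```python
try:
    import bpy  # only available inside Blender's bundled Python
except ImportError:
    bpy = None


def set_render_range(scene, start, end, width, height):
    """Set the frame range and resolution; return the number of frames."""
    scene.frame_start = start
    scene.frame_end = end
    scene.render.resolution_x = width
    scene.render.resolution_y = height
    scene.render.resolution_percentage = 100  # render at full size
    return end - start + 1


if bpy is not None:
    # Placeholder values: substitute your own range and resolution.
    frames = set_render_range(bpy.context.scene, 1, 250, 2048, 2048)
    print("Rendering", frames, "frames")
    bpy.ops.render.render(animation=True)  # same as pressing the Animation button
```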

Download the YouTube 360-Degree Video Tool here: https://support.google.com/youtube/answer/6178631?hl=en

Unzip it and fire it up.

Open the video you just created. Check ‘spherical’ and ‘3D Top-bottom’.

‘Save as’ and place your newly created file wherever you’d like.
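If you have a lot of videos to tag, clicking through the app gets old. As an alternative to the GUI, Google’s open-source spatial-media project ships a command-line injector. The sketch below only builds and runs that command; it assumes you have a checkout of that repository, that you run it from the repository’s root folder, and that the `-i` and `--stereo=top-bottom` flags behave as its README describes. The file names and the `repo_dir` parameter are placeholders.

```python
import subprocess
import sys


def build_inject_command(src, dst, stereo="top-bottom"):
    """Build the argument list for the spatial-media metadata injector."""
    return [sys.executable, "spatialmedia", "-i", f"--stereo={stereo}", src, dst]


def inject_metadata(src, dst, repo_dir):
    """Run the injector from the spatial-media checkout at repo_dir."""
    subprocess.run(build_inject_command(src, dst), cwd=repo_dir, check=True)


# Show the command without running it (paths are placeholders).
print(" ".join(build_inject_command("render.mp4", "render_injected.mp4")[1:]))
```

Because the command runs from inside the checkout, pass absolute paths for the input and output files when calling `inject_metadata`.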

Upload the newly created video to YouTube as you would any other.

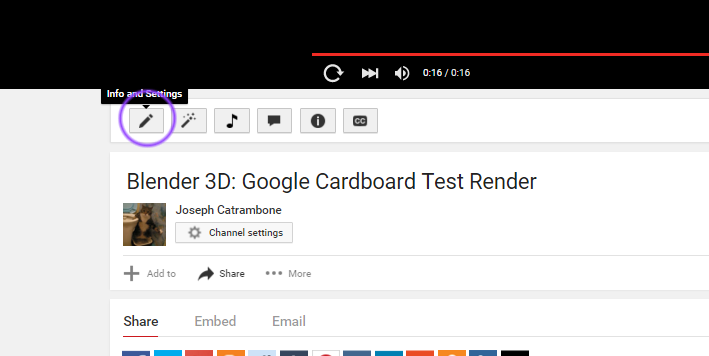

When completed, go to ‘Info and Settings’ on the video page.

Select the ‘Advanced Options’ tab and check “This Video is 3D.” Select the Top-Bottom option and save your changes.

That’s it! Now you should be able to view your 3D video in your browser or in Cardboard on your device.